Body gesture film editing

A system that converts the body into an editing table.

Film editing has historically been a mental and linear process—an operation of selection, rhythm, and decision that occurs in front of a screen and a timeline, with hands on a keyboard.

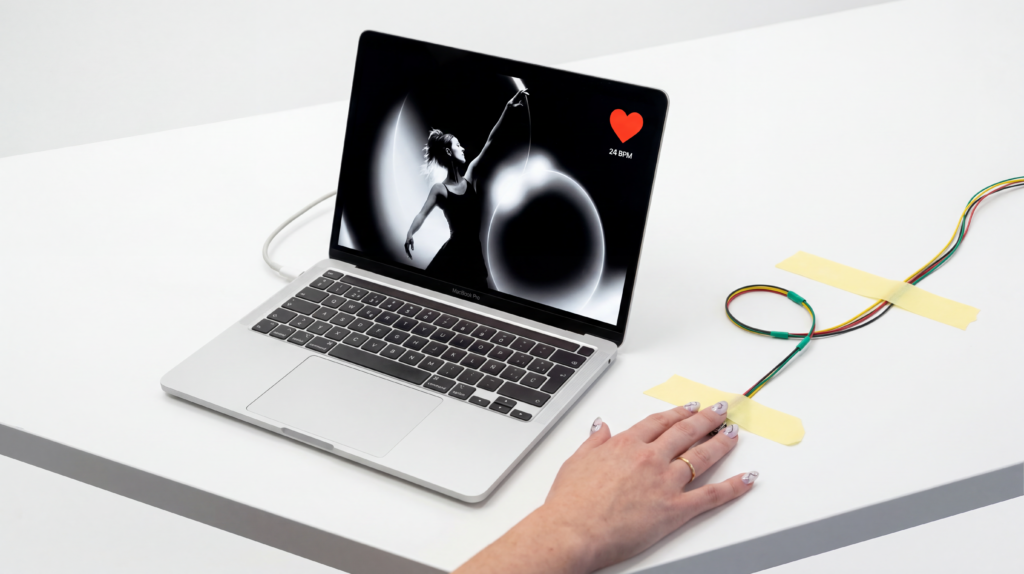

Kinesthesia starts from a question that arose in a workshop: can editing also be a physical process? Can the body, its posture, and its movement in space become the instrument with which a film is assembled? This prototype is an experimental response to that question. Developed as a research laboratory following an Artefacto workshop, the system lets a person edit and control the playback of moving images with body gestures alone: no keyboard, no mouse, no conventional graphical interface.

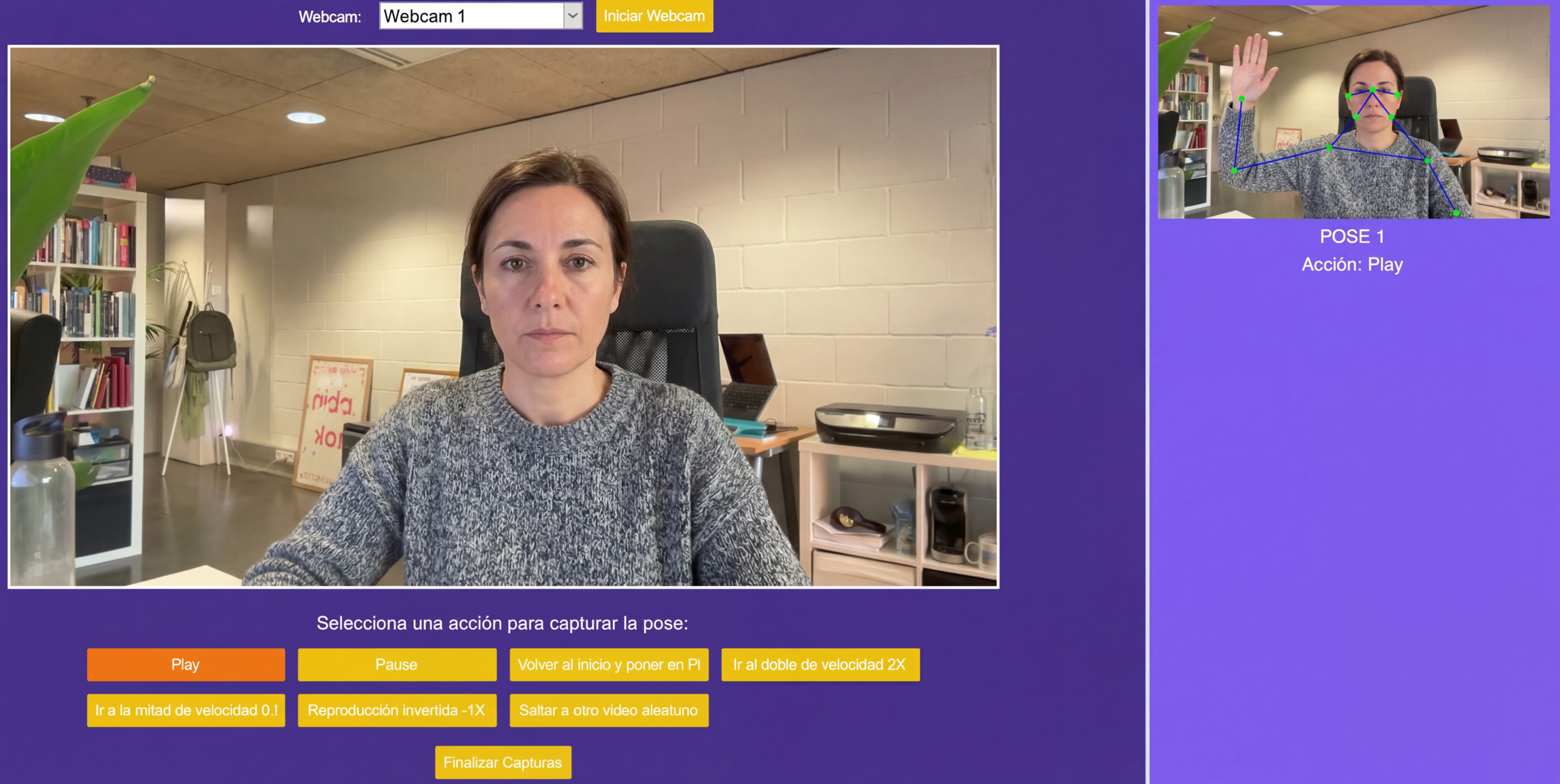

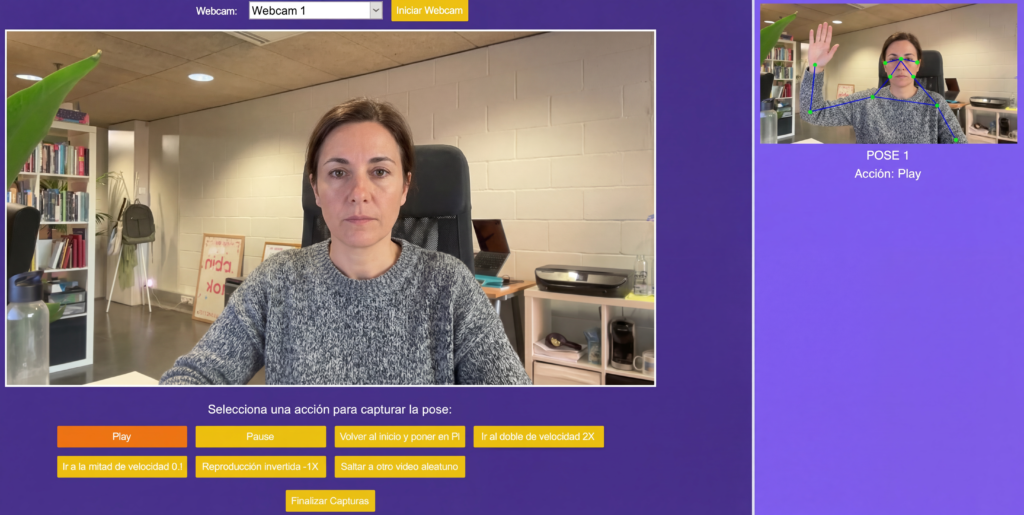

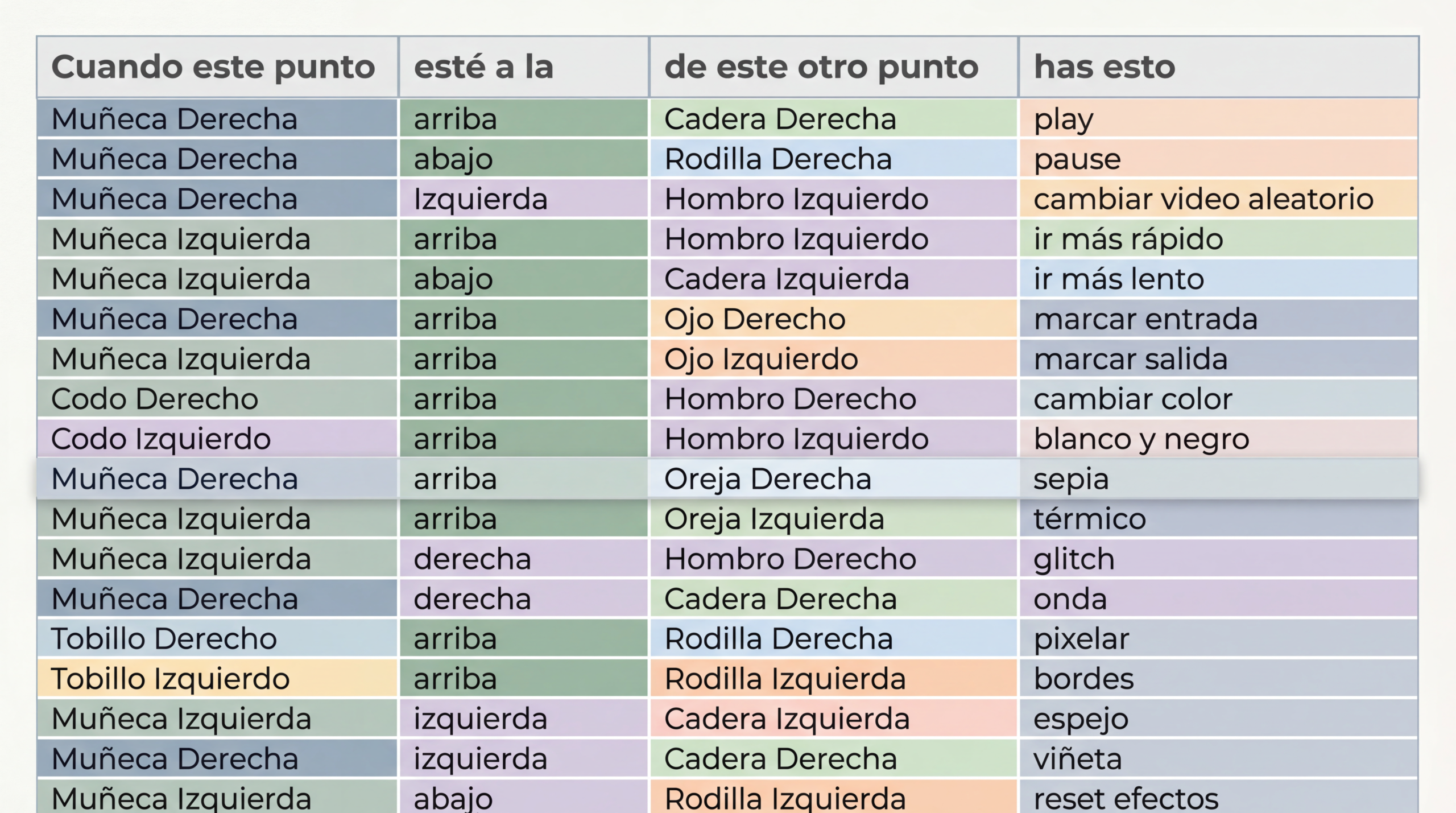

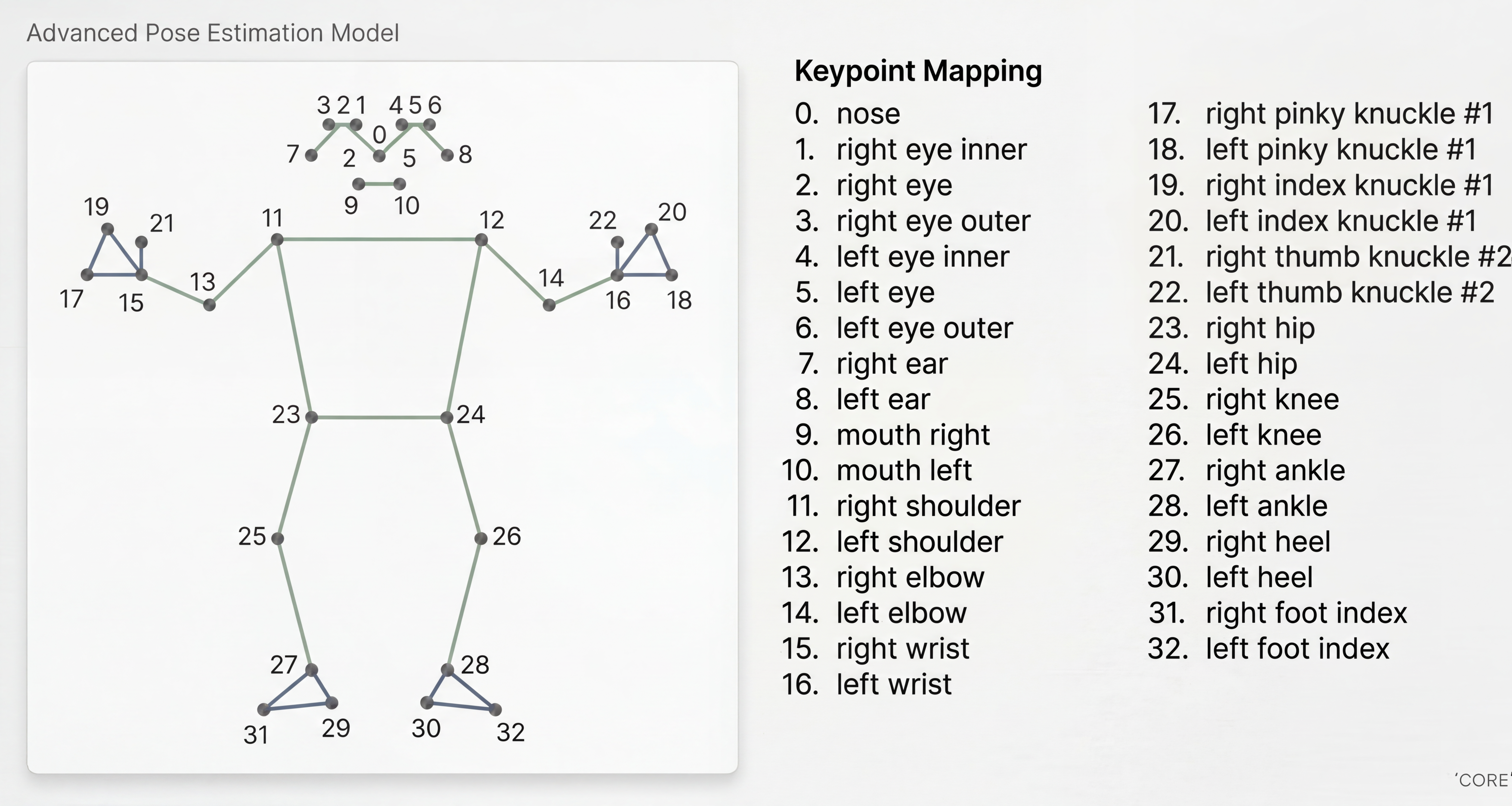

The system is built on MediaPipe Pose, the body pose detection solution developed by Google. The underlying model is BlazePose GHUM 3D, which is optimized for real-time inference and detects 33 anatomical points of the human body, from the nose to the toes. Each of these points, called a landmark, is a spatial coordinate that the model estimates from a webcam feed. Inference runs on TensorFlow Lite with the XNNPACK delegate, so the entire process runs on a CPU without specialized hardware.

From this set of points, the system evaluates spatial relationships between specific landmarks. For example: if the left wrist is above the left hip, playback is activated; if it descends below the knee, the video pauses… Each gestural condition and its associated action is defined in a Google Sheets file, which turns the spreadsheet into both a choreographic score and a no-code programmable interface.
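The wrist, hip, and knee example can be sketched as a small rule evaluator. This is a minimal illustration, not the project's code: the `Rule` fields, the `"above"`/`"below"` vocabulary, and the action names are hypothetical stand-ins for whatever columns the Google Sheets file actually uses. The landmark indices (15, 23, 25) follow the MediaPipe Pose topology, and the comparison relies on image coordinates, where y grows downward.

```python
from dataclasses import dataclass

# Indices from the MediaPipe Pose topology (33 landmarks, numbered 0-32).
LEFT_WRIST, LEFT_HIP, LEFT_KNEE = 15, 23, 25


@dataclass(frozen=True)
class Rule:
    """One row of a hypothetical gesture sheet: condition plus action."""
    point_a: int
    relation: str  # "above" or "below" (assumed vocabulary)
    point_b: int
    action: str


def holds(rule, landmarks):
    """Check a rule against landmarks given as {index: (x, y)}, normalized."""
    ya = landmarks[rule.point_a][1]
    yb = landmarks[rule.point_b][1]
    # Image y grows downward, so "above" means a smaller y value.
    return ya < yb if rule.relation == "above" else ya > yb


def triggered_actions(rules, landmarks):
    """Return the actions of every rule whose condition currently holds."""
    return [rule.action for rule in rules if holds(rule, landmarks)]


RULES = [
    Rule(LEFT_WRIST, "above", LEFT_HIP, "play"),
    Rule(LEFT_WRIST, "below", LEFT_KNEE, "pause"),
]

# One frame of made-up coordinates: wrist raised above the hip.
frame_landmarks = {LEFT_WRIST: (0.40, 0.30), LEFT_HIP: (0.45, 0.50), LEFT_KNEE: (0.45, 0.75)}
print(triggered_actions(RULES, frame_landmarks))
```

Keeping the rules as plain data is what would let a spreadsheet row replace a line of code: changing the choreography means editing a row, not the program.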

Video processing runs on OpenCV and MoviePy, which make it possible to apply effects in real time and to assemble the final edit from the timecodes marked with the body.
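One way to picture how body-marked timecodes become an edit is to pair each "play" mark with the next "pause" mark and keep the spans in between. The sketch below assumes that marks are stored as `(seconds, action)` tuples; that format and the function name `segments_from_marks` are illustrative, not taken from the project. The resulting `(start, end)` pairs are the kind of cut list that could then be trimmed from the source and joined with MoviePy's `concatenate_videoclips`.

```python
def segments_from_marks(marks, duration):
    """Turn time-sorted (seconds, action) marks into (start, end) segments.

    A segment opens at a "play" mark and closes at the next "pause" mark.
    Repeated marks of the same kind are ignored, and a segment still open
    at the end of the source is closed at `duration`.
    """
    segments = []
    start = None
    for time, action in marks:
        if action == "play" and start is None:
            start = time
        elif action == "pause" and start is not None:
            if time > start:
                segments.append((start, time))
            start = None
    if start is not None and duration > start:
        segments.append((start, duration))
    return segments


# Made-up session: two gestures to play, two to pause, source lasts 8 s.
marks = [(1.0, "play"), (3.5, "pause"), (3.6, "pause"), (5.0, "play")]
print(segments_from_marks(marks, duration=8.0))
```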

The question that organizes Kinesthesia has roots in debates that cinema has left unresolved for decades. When Eisenstein described montage as collision, or when editors in the Soviet tradition spoke of rhythm as something almost muscular, they were pointing to a bodily dimension of editorial decision-making that digital technology has tended to make invisible.

The workshop from which this prototype emerged challenged participants to think about editing through posture, balance, and physical distance from the screen. Kinesthesia translates that experience into a functional system and raises questions about the nature of technical authorship: when the editing instructions live in a shared spreadsheet and the gesture that activates them can come from any body present, who edits the film?

Within Artefacto’s research, Kinesthesia operates as a laboratory on the boundaries between interface, body, and narration.

The complete flow, from body posture to the exported video file, runs without any traditional input device. The system is designed to remain open to modification: the gesture map in Google Sheets can be rewritten, the source videos can be swapped, and the available visual effects, which range from color inversion to sinusoidal distortion, can be switched on or off to suit each session's proposal.
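The two effects named here, and the idea of switching them on or off per session, can be illustrated with NumPy arrays, which is how OpenCV represents frames in Python. This is a sketch under assumptions: the function names, the `EFFECTS` registry, and the amplitude and wavelength defaults are hypothetical, and the distortion shown (a per-row horizontal shift that wraps around the frame edge) is only one of several ways to produce a sinusoidal warp.

```python
import numpy as np


def invert_colors(frame):
    """Invert an 8-bit image: every channel value v becomes 255 - v."""
    return 255 - frame


def sine_distort(frame, amplitude=8.0, wavelength=40.0):
    """Shift each row sideways by a sine of its row index, wrapping at the edge."""
    height, width = frame.shape[:2]
    rows = np.arange(height)
    shifts = np.round(amplitude * np.sin(2 * np.pi * rows / wavelength)).astype(int)
    # For each output pixel, look up the source column it is copied from.
    source_cols = (np.arange(width)[None, :] - shifts[:, None]) % width
    return frame[rows[:, None], source_cols]


# Hypothetical registry: a session enables effects by name.
EFFECTS = {"invert": invert_colors, "sine": sine_distort}


def apply_effects(frame, enabled):
    """Apply the enabled effects to one frame, in the order given."""
    for name in enabled:
        frame = EFFECTS[name](frame)
    return frame


# Synthetic 120x160 frame with a white vertical bar, instead of a webcam image.
frame = np.zeros((120, 160, 3), dtype=np.uint8)
frame[:, 70:90] = 255
processed = apply_effects(frame, ["invert", "sine"])
print(processed.shape, processed.dtype)
```

Because the enabled effects are just a list of names, that list could come from the same kind of editable source as the gesture map.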

Kinesthesia is an open instrument for continuing to explore whether cinema can be edited with the entire body.